Azure Load Balancer – Azure Traffic Manager

Introduction to Azure Load Balancer

Microsoft Azure, formerly known as Windows Azure, is Microsoft’s public cloud computing platform that provides a variety of cloud services such as analytics, computing, networking, and storage.

You can use these services to run existing applications or to build and scale new ones in the public cloud. Load balancing in Azure provides a higher level of scale and availability by distributing incoming requests across multiple VMs (virtual machines). A load balancer can be created using the Azure portal.

In layman’s terms, an Azure Load Balancer is a cloud-based system that allows a group of machines to function as a single machine to serve a user’s request.

Simply put, a load balancer’s job is to accept client requests, determine which machines in the set can handle them, and then forward those requests to the appropriate machines.

There are numerous load balancers and algorithms that process requests at different layers of the TCP/IP stack.

Need for a Load Balancer

- A public load balancer load-balances incoming internet traffic to your virtual machines.

- An internal load balancer load-balances traffic across virtual machines inside a virtual network. In a hybrid scenario, you can also reach its front end from an on-premises network.

- You can also forward traffic to a specific port on specific virtual machines with inbound NAT (network address translation) rules.

- Azure load balancer enables you to offer outbound connectivity for virtual machines inside your virtual network by utilizing a public load balancer.

Load Balancer Resources

Load balancer resources are objects through which you express how Azure should program its multi-tenant infrastructure to achieve the scenario you want. There is no direct relationship between load balancer resources and the actual infrastructure: creating a load balancer does not create an instance, and capacity is always available.

A load balancer resource is either a public load balancer or an internal load balancer. Its functions are expressed as a front end, a rule, a health probe, and a backend pool definition. You place virtual machines in the backend pool by specifying the backend pool from the virtual machine.
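The relationship between these four parts can be pictured as a small data model. The sketch below is illustrative only: the class names, field names, and IP addresses are assumptions made for this example and are not the Azure SDK or the Azure resource schema.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class Frontend:
    name: str
    ip_address: str
    public: bool  # True for a public load balancer, False for an internal one


@dataclass
class HealthProbe:
    name: str
    protocol: str  # "Tcp", "Http" or "Https"
    port: int


@dataclass
class BackendPool:
    name: str
    members: List[str] = field(default_factory=list)  # hypothetical VM names


@dataclass
class Rule:
    # A rule ties one front end and port to one backend pool and port,
    # and names the probe that decides which pool members may receive flows.
    name: str
    frontend: Frontend
    frontend_port: int
    backend_pool: BackendPool
    backend_port: int
    probe: HealthProbe
    protocol: str = "Tcp"


@dataclass
class LoadBalancer:
    name: str
    frontends: List[Frontend]
    probes: List[HealthProbe]
    pools: List[BackendPool]
    rules: List[Rule]

    @property
    def kind(self) -> str:
        return "public" if any(f.public for f in self.frontends) else "internal"


frontend = Frontend("web-frontend", "203.0.113.10", public=True)
probe = HealthProbe("http-probe", "Http", 80)
pool = BackendPool("web-pool")
# Virtual machines join the pool; here that is modelled by adding their names.
pool.members.extend(["vm1", "vm2"])
rule = Rule("http-rule", frontend, 80, pool, 80, probe)
lb = LoadBalancer("demo-lb", [frontend], [probe], [pool], [rule])
print(lb.kind, [r.name for r in lb.rules], pool.members)
```

The point of the model is that a rule is the piece that connects the other three: without a rule, a front end and a backend pool are not related to each other.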

Features of a Load Balancer

For UDP and TCP applications, a load balancer offers the following capabilities.

Creating a Load-Balancing Rule

You can use Azure Load Balancer to create a load-balancing rule that distributes traffic arriving at the front end to the backend pool instances. The load balancer uses a hash-based algorithm to distribute inbound flows and rewrites the flow headers to the chosen backend pool instance accordingly. A server is available to receive new flows when a health probe indicates a healthy backend endpoint.

To map flows to available servers, the load balancer by default uses a 5-tuple hash made up of the source IP address, source port, destination IP address, destination port, and IP protocol number. You can create affinity to a specific source IP address by selecting a 2- or 3-tuple hash for a given rule. All packets of the same flow arrive on the same instance behind the load-balanced front end. When the client starts a new flow from the same source IP, the source port changes, so the 5-tuple hash may direct the new flow to a different backend endpoint.
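The effect of the tuple size on affinity can be sketched in a few lines. This is a minimal illustration under stated assumptions, not Azure's actual hash: SHA-256 stands in for the real hash function, `pick_backend` is a hypothetical helper, and the 2-tuple is taken to be source IP plus destination IP and the 3-tuple to add the protocol.

```python
import hashlib
from typing import Sequence, Tuple

# src_ip, src_port, dst_ip, dst_port, protocol number (6 = TCP, 17 = UDP)
Flow = Tuple[str, int, str, int, int]


def pick_backend(flow: Flow, backends: Sequence[str], tuple_size: int = 5) -> str:
    """Map a flow to a backend by hashing 2, 3 or 5 of its fields."""
    src_ip, src_port, dst_ip, dst_port, proto = flow
    if tuple_size == 5:
        key = (src_ip, src_port, dst_ip, dst_port, proto)
    elif tuple_size == 3:
        key = (src_ip, dst_ip, proto)
    elif tuple_size == 2:
        key = (src_ip, dst_ip)
    else:
        raise ValueError("tuple_size must be 2, 3 or 5")
    # SHA-256 is only a stand-in for whatever hash the real service uses.
    digest = hashlib.sha256(repr(key).encode()).digest()
    return backends[int.from_bytes(digest[:8], "big") % len(backends)]


backends = ["vm1", "vm2", "vm3"]
first = ("198.51.100.7", 50000, "203.0.113.10", 80, 6)
second = ("198.51.100.7", 50001, "203.0.113.10", 80, 6)  # same client, new source port

# With the 5-tuple, the two flows may or may not land on the same backend.
print(pick_backend(first, backends), pick_backend(second, backends))
# With the 2-tuple, the source port is ignored, so both flows share a backend.
print(pick_backend(first, backends, 2), pick_backend(second, backends, 2))
```

Because the source port is not part of the 2- or 3-tuple key, a client that opens a new connection keeps reaching the same backend, which is the affinity described above.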

Transparent and Application agnostic

Load Balancer does not interact directly with TCP, UDP, or the application layer, so it can support any TCP or UDP application scenario. It does not initiate or terminate flows, does not provide an application layer gateway function, and does not interact with the flow’s payload; all protocol handshakes occur directly between the client and the backend pool instance. Every response to an inbound flow comes from a virtual machine. The original source IP address is also preserved when the flow arrives on the virtual machine.

Health probes

The load balancer determines the health of instances in the backend pool using the health probes that you define. When a probe fails to respond, the load balancer stops sending new connections to the unhealthy instance. Existing connections are unaffected and remain active until the application terminates the flow, the virtual machine is shut down, or an idle timeout occurs. Load balancer provides different health probe types for TCP, HTTP, and HTTPS endpoints.
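The distinction between new and existing connections can be illustrated with a small simulation. It is a sketch under assumptions: `ProbedPool`, its `unhealthy_threshold` parameter, and the simple selection rule are invented for this example and do not describe how Azure implements probing.

```python
from typing import Dict, List


class ProbedPool:
    """Toy backend pool that stops sending new flows to unhealthy instances."""

    def __init__(self, instances: List[str], unhealthy_threshold: int = 2) -> None:
        # Hypothetical threshold: consecutive probe failures before an
        # instance is treated as unhealthy.
        self.unhealthy_threshold = unhealthy_threshold
        self.failures: Dict[str, int] = {name: 0 for name in instances}
        self.flows: Dict[str, str] = {}  # flow id -> instance serving it

    def record_probe(self, instance: str, responded: bool) -> None:
        # A successful probe resets the failure count.
        self.failures[instance] = 0 if responded else self.failures[instance] + 1

    def healthy(self) -> List[str]:
        return [n for n, f in self.failures.items() if f < self.unhealthy_threshold]

    def route(self, flow_id: str) -> str:
        # Existing flows stay where they are, even if the instance went unhealthy.
        if flow_id in self.flows:
            return self.flows[flow_id]
        candidates = self.healthy()
        if not candidates:
            raise RuntimeError("no healthy instances available")
        # Simple deterministic choice for the demo, not a real distribution algorithm.
        target = candidates[len(self.flows) % len(candidates)]
        self.flows[flow_id] = target
        return target


pool = ProbedPool(["vm1", "vm2"])
print(pool.route("flow-a"))         # new flow, both instances healthy
pool.record_probe("vm1", False)
pool.record_probe("vm1", False)     # vm1 now counts as unhealthy
print(pool.route("flow-a"))         # existing flow is left alone
print(pool.route("flow-b"))         # new flow only considers healthy instances
```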

Automatic reconfiguration

When you scale instances up or down, the load balancer instantly reconfigures itself. When virtual machines are added or removed from the backend pool, the load balancer is reconfigured without any additional operations on the load balancer resource.

Outbound Connections

All outbound flows from private IP addresses in the virtual network to public IP addresses on the internet can be translated to the load balancer’s front-end IP address. When a public front end is tied to a backend virtual machine through a load-balancing rule, Azure automatically translates that machine’s outbound connections to the public front-end IP address. The Azure documentation on outbound connections covers this in more detail.
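The translation step can be sketched as a lookup table that maps each private source endpoint to a port on the front-end IP address. This is a simplified model under assumptions: `SnatTable`, its port range, and the addresses are hypothetical, and a real SNAT implementation also takes the destination into account when allocating ports.

```python
from typing import Dict, List, Tuple

Endpoint = Tuple[str, int]  # (ip address, port)


class SnatTable:
    """Toy source NAT table with a finite pool of front-end ports."""

    def __init__(self, frontend_ip: str, first_port: int, port_count: int) -> None:
        self.frontend_ip = frontend_ip
        self.free_ports: List[int] = list(range(first_port, first_port + port_count))
        self.mappings: Dict[Endpoint, Endpoint] = {}

    def translate(self, private: Endpoint) -> Endpoint:
        # Reuse the mapping for a connection that is already translated.
        if private in self.mappings:
            return self.mappings[private]
        if not self.free_ports:
            raise RuntimeError("SNAT port exhaustion: no front-end ports left")
        public = (self.frontend_ip, self.free_ports.pop(0))
        self.mappings[private] = public
        return public

    def release(self, private: Endpoint) -> None:
        # Return the front-end port to the pool when the connection ends.
        public = self.mappings.pop(private)
        self.free_ports.append(public[1])


table = SnatTable("203.0.113.10", first_port=1024, port_count=2)
print(table.translate(("10.0.0.4", 50000)))
print(table.translate(("10.0.0.5", 50000)))
```

The finite port pool is why a front end can run out of ports when many outbound connections are open at once.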

How to Create and Manage a Standard Load Balancer Using the Azure Portal

Load balancing increases scale and availability by distributing incoming requests across multiple virtual machines. In this section, we create a public load balancer to load-balance virtual machines. A standard load balancer supports only a standard public IP address, so when you create one you should also create a new standard public IP address to serve as its front end. The process consists of five steps: create an Azure load balancer, create virtual machines and install IIS, create the load balancer resources, view the load balancer in action, and add and remove VMs from the load balancer.

Pricing

The cost of using a standard load balancer is determined by the amount of processed outbound and inbound data as well as the number of configured load-balancing rules. Standard load balancer pricing can be found on the load balancer pricing page.

SLA

You can check the load balancer SLA page for more information about the standard load balancer SLA.

Limitations of a Load Balancer

A load balancer is a TCP or UDP product that provides load balancing and port forwarding for these IP protocols. Load-balancing rules and inbound NAT rules are supported for TCP and UDP but not for other IP protocols such as ICMP. The load balancer is not a proxy: it does not terminate, respond to, or otherwise interact with the payload of a TCP or UDP flow.

Unlike public load balancers, which provide outbound connections by translating private IP addresses inside the virtual network to public IP addresses, internal load balancers do not translate outbound-originated connections to the internal load balancer front end, because both are in private IP address space. Because no translation is required, the possibility of SNAT port exhaustion inside the internal IP address space is eliminated.

The side effect is that if an outbound flow from a virtual machine in the backend pool targets the front end of the internal load balancer in whose pool it resides, and the flow is mapped back to that same virtual machine, the two legs of the flow do not match and the flow fails. If the flow is mapped to a different virtual machine in the backend pool, it succeeds. When the flow maps back to itself, the outbound flow appears to originate from the virtual machine to the front end, and the corresponding inbound flow appears to originate from the virtual machine to itself.

Microsoft’s Azure Load Balancer supports load balancing for cloud services and virtual machines in order to scale applications and protect against the failure of a single system, which could result in unanticipated downtime and service disruption.

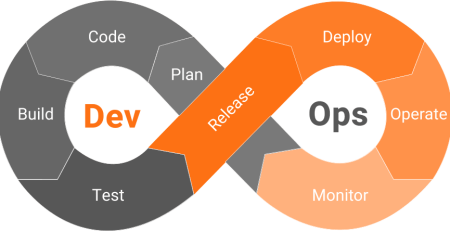

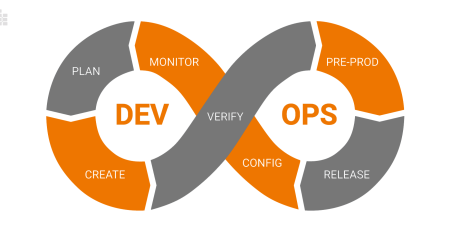

This article looks at three load-balancing options in Microsoft Azure: Azure Load Balancer, Internal Load Balancer (ILB), and Traffic Manager.

Azure Load Balancer and Internal Load Balancer

- The Azure Load Balancer is a layer 4 (transport layer) load balancer. It applies a hash function to the source IP, source port, destination IP, destination port, and protocol type to spread internet traffic across distributed virtual machines.

- Because the hash is computed over the source IP, source port, destination IP, destination port, and protocol type, all traffic with the same five values goes to the same endpoint for the duration of a session.

- If a user closes the session and begins a new one, traffic from the new session may be directed to a different endpoint from the previous session, but all traffic within the new session goes to the same endpoint.

- The hash function distribution in the Azure Load Balancer leads to an arbitrary endpoint selection, which over time creates an even distribution of the traffic flow for both UDP and TCP protocol sessions.

- The Internal Load Balancer is an Azure Load Balancer that has only an internal-facing virtual IP. This means you cannot use an Internal Load Balancer to balance traffic coming from the internet to internal input endpoints.

- The Internal Load Balancer implements load balancing only for virtual machines connected to an internal Azure cloud service or a virtual network.

- If the Internal Load Balancer is deployed within an Azure cloud service, all load-balanced nodes must be part of that cloud service.

- If the Internal Load Balancer is used inside a virtual network, all load-balanced nodes must be connected to that same virtual network.

Azure Traffic Manager

The Traffic Manager load balancer is an Internet-facing solution for balancing traffic load between multiple endpoints.

To direct traffic to internet resources, Traffic Manager uses a policy engine together with DNS: it answers DNS queries with the endpoint that the policy selects. Those resources can be hosted in a single data center or in multiple data centers around the world.

Traffic Manager is only involved in determining the initial endpoint. It is not involved in the redirection of each and every packet, so it cannot be considered a standard load balancing engine.

The Traffic Manager incorporates three different types of load balancing algorithms.

1. Performance Algorithm – Directs the user to the load-balanced node with the lowest latency, which is usually the nearest one.

2. Failover Algorithm – Directs the user to the primary node; if the primary node is down, it redirects the client to a backup node.

3. Round Robin Algorithm – Distributes users across the nodes in turn, in proportion to the weight assigned to each node.
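The three algorithms differ only in how they pick an endpoint from the same list, which a short sketch makes concrete. The `Endpoint` fields, the region names, and the latency figures below are assumptions for illustration, and the weighted rotation is one simple way to honour weights, not necessarily how Traffic Manager does it.

```python
import itertools
from dataclasses import dataclass
from typing import Iterator, List


@dataclass
class Endpoint:
    name: str
    latency_ms: float  # hypothetical measured latency from the client
    priority: int      # lower number = preferred in failover
    weight: int        # share of traffic in the weighted rotation
    healthy: bool = True


def _live(endpoints: List[Endpoint]) -> List[Endpoint]:
    live = [e for e in endpoints if e.healthy]
    if not live:
        raise ValueError("no healthy endpoints")
    return live


def performance(endpoints: List[Endpoint]) -> Endpoint:
    """Pick the healthy endpoint with the lowest latency."""
    return min(_live(endpoints), key=lambda e: e.latency_ms)


def failover(endpoints: List[Endpoint]) -> Endpoint:
    """Pick the primary endpoint, or the next backup if it is down."""
    return min(_live(endpoints), key=lambda e: e.priority)


def weighted_round_robin(endpoints: List[Endpoint]) -> Iterator[Endpoint]:
    """Cycle through healthy endpoints, each appearing `weight` times per round."""
    schedule = [e for e in _live(endpoints) for _ in range(e.weight)]
    if not schedule:
        raise ValueError("all healthy endpoints have zero weight")
    return itertools.cycle(schedule)


endpoints = [
    Endpoint("west-europe", latency_ms=40.0, priority=1, weight=3),
    Endpoint("east-us", latency_ms=95.0, priority=2, weight=1),
]
print(performance(endpoints).name)
print(failover(endpoints).name)
rotation = weighted_round_robin(endpoints)
print([next(rotation).name for _ in range(4)])
```

Since Traffic Manager only determines the initial endpoint, each of these functions models a single decision made when a client first looks up the service, not a per-packet choice.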

Traffic Manager applies the selected load-balancing algorithm through a set of ‘intelligent’ policies, and each Traffic Manager URL resource is connected to its set of intelligent policies.

Traffic Manager can also disable or enable endpoints in its policy engine without shutting them down. This lets you add or remove endpoints as needed and makes endpoint maintenance easier to manage.

Conclusion

Users who need multi-tier applications with global reach and scalability can use Traffic Manager’s ‘performance’ load-balancing algorithm to direct clients to the closest endpoint. That endpoint could be an Azure Load Balancer VIP, which load-balances the user-interface tier across a set of nodes.

Each user-interface-tier node can then use an Internal Load Balancer, which distributes middleware-tier load across multiple nodes. Each middleware tier should be linked to a ‘SQL Always On Availability Group’ data tier, which uses a third-party appliance for the SQL listener implementation.

Whatever your load balancing requirements are, whether they are for a single-tier or multi-tier virtual machine or cloud service, Microsoft Azure Load Balancer can provide flexible tailor-made solutions specifically designed for your deployment environments.
