Cloud savings with autoscale on Azure

Unused cloud resources drain your computing budget unnecessarily. Unlike legacy on-premises architectures, the cloud does not require you to over-provision compute resources for peak usage.

Autoscaling is a value lever that can help you unlock cost savings for your Azure workloads by automatically scaling up and down the resources in use to better align capacity to demand. For dynamic workloads with inherently “peaky” demand, this practice can significantly reduce wasted spend.

Workloads with sporadic high peak demand may have extremely low average utilization, making them unsuitable for other cost-cutting strategies such as rightsizing and reservations.

Autoscaling adds resources to handle the load and meet service-level agreements for performance and availability when an app places a higher demand on cloud resources. And, during times when load demand is low (nights, weekends, holidays), autoscaling can remove idle resources to save money. Autoscaling scales between the minimum and maximum number of instances and will automatically run, add, or remove VMs based on a set of rules.
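To make the rule-based behavior concrete, here is a minimal Python sketch of how a scale decision might be evaluated between a minimum and a maximum instance count. It is not Azure's implementation: the `ScaleRule` type, the `desired_instances` function, and the 70% and 30% CPU thresholds are all assumptions chosen for illustration.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass
class ScaleRule:
    """One threshold rule. All field names are hypothetical."""
    threshold: float  # average CPU percentage that triggers the rule
    direction: str    # "out" adds instances, "in" removes them
    change: int       # number of instances to add or remove


def desired_instances(current: int, avg_cpu: float, rules: list[ScaleRule],
                      minimum: int, maximum: int) -> int:
    """Apply the first matching rule, then clamp to the configured bounds."""
    target = current
    for rule in rules:
        if rule.direction == "out" and avg_cpu > rule.threshold:
            target = current + rule.change
            break
        if rule.direction == "in" and avg_cpu < rule.threshold:
            target = current - rule.change
            break
    # Never go below the minimum or above the maximum instance count.
    return max(minimum, min(maximum, target))


# Assumed example rules: add one instance above 70% CPU, remove one below 30%.
rules = [ScaleRule(70.0, "out", 1), ScaleRule(30.0, "in", 1)]
```

With these assumed rules, a workload at 85% CPU on two instances would grow to three, while a workload already at the maximum stays there no matter how high the load climbs.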

Autoscaling is a near-real-time cost optimization technique. Consider this: instead of building an addition to your house with extra bedrooms that will go unused for the majority of the year, you have an agreement with a nearby hotel. Your guests can check in at any time, and the hotel will automatically charge you for the days they stay.

Autoscaling not only takes advantage of cloud elasticity by paying for capacity only when it is required, but also reduces the need for an operator to constantly monitor system performance and make decisions about adding or removing resources.
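The pay-only-when-needed argument can be checked with some quick arithmetic. The sketch below compares a fleet sized for peak all day against one that follows demand hour by hour. The demand profile and the price of 0.10 per instance-hour are invented for illustration and are not Azure prices.

```python
def fixed_cost(peak_instances: int, hours: int, rate: float) -> float:
    """Cost of running a peak-sized fleet for every hour."""
    return peak_instances * hours * rate


def autoscaled_cost(hourly_instances: list, rate: float) -> float:
    """Cost when the instance count follows demand hour by hour."""
    return sum(hourly_instances) * rate


# Hypothetical day: 2 instances for 20 quiet hours, 10 during a 4-hour peak.
day = [2] * 20 + [10] * 4
RATE = 0.10  # assumed price per instance-hour, not a real Azure rate

fixed = fixed_cost(max(day), len(day), RATE)
scaled = autoscaled_cost(day, RATE)
savings = 1 - scaled / fixed

print(f"fixed={fixed:.2f} autoscaled={scaled:.2f} savings={savings:.0%}")
```

Under these assumptions the peak-sized fleet uses 240 instance-hours and the autoscaled fleet uses 80, which is roughly a two-thirds reduction. Real savings depend on how peaky the workload is and on how quickly instances can be added and removed.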

What services can you autoscale?

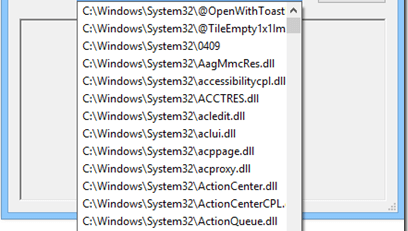

For most compute options, Azure provides built-in autoscaling via Azure Monitor autoscale, including:

- Azure Virtual Machine Scale Sets

- Service Fabric

- Azure App Service

- Azure Cloud Services, which has built-in autoscaling at the role level

Azure Functions differs from the previous compute options in that you do not need to configure any autoscale rules. Instead, the hosting plan you select determines how your function app is scaled:

- With a consumption plan, your function app will scale automatically, and you will only pay for compute resources when your functions are running.

- With a premium plan, your app will automatically scale based on demand using pre-warmed workers that run applications with no delay after being idle.

- With a dedicated plan, you will run your functions within an App Service plan at regular App Service plan rates.

Azure Monitor autoscale offers a unified set of autoscaling capabilities for virtual machine scale sets, Azure App Service, and Azure Cloud Services. Scaling can be done on a set schedule or based on a runtime metric like CPU or memory usage.
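Schedule-based scaling can be pictured as picking a different set of instance bounds depending on the time of day. The Python sketch below illustrates that idea only. The `Profile` type, the two profiles, and the 08:00 to 18:00 weekday window are hypothetical and do not reflect the Azure Monitor data model.

```python
from __future__ import annotations

from dataclasses import dataclass
from datetime import datetime


@dataclass(frozen=True)
class Profile:
    """Instance bounds that apply during a time window (hypothetical type)."""
    name: str
    minimum: int
    maximum: int


# Assumed bounds for illustration only.
BUSINESS_HOURS = Profile("business-hours", minimum=4, maximum=20)
OFF_HOURS = Profile("off-hours", minimum=1, maximum=4)


def active_profile(now: datetime) -> Profile:
    """Pick the profile by schedule: weekdays from 08:00 up to 18:00."""
    is_weekday = now.weekday() < 5  # Monday is 0, Sunday is 6
    if is_weekday and 8 <= now.hour < 18:
        return BUSINESS_HOURS
    return OFF_HOURS


def clamp_to_profile(requested: int, now: datetime) -> int:
    """Limit a metric-driven instance request to the active profile's bounds."""
    profile = active_profile(now)
    return max(profile.minimum, min(profile.maximum, requested))
```

In this sketch the schedule sets the bounds and a runtime metric would supply the `requested` count, so the two approaches combine: a request for ten instances is honored on a weekday morning but capped at four on a weekend.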

If the platform’s built-in autoscaling features meet your needs, use them. If not, think about whether you really need more complex scaling features. Additional requirements may include greater control granularity, different methods for detecting trigger events for scaling, scaling across subscriptions, and scaling other types of resources.

It is important to note that the design of an app can have an impact on how it handles scaling as the load increases. The article "Design scalable Azure applications" in the Microsoft Azure Well-Architected Framework goes over design considerations for scalable applications, such as choosing the right data storage and VM size.

Also, in general, scaling out (adding instances) is preferable to scaling up (moving to larger instances). Typically, scaling up entails deprovisioning or downtime. When a workload is highly variable, choose smaller instances and scale out to achieve the required level of performance.
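One way to see why smaller instances suit variable workloads is to compare how closely provisioned capacity can track demand. The sketch below uses abstract "capacity units" and assumes cost is proportional to capacity. Both are simplifications, and the function names are made up for this example.

```python
import math


def instances_needed(demand_units: int, capacity_per_instance: int) -> int:
    """Smallest instance count whose total capacity covers the demand."""
    return max(1, math.ceil(demand_units / capacity_per_instance))


def idle_capacity(demand_units: int, capacity_per_instance: int) -> int:
    """Capacity that is provisioned but not used at this demand level."""
    count = instances_needed(demand_units, capacity_per_instance)
    return count * capacity_per_instance - demand_units


# Assumed demand of 9 units, served by large (8-unit) or small (2-unit) instances.
large_idle = idle_capacity(9, 8)  # 2 large instances provide 16 units
small_idle = idle_capacity(9, 2)  # 5 small instances provide 10 units
```

Here the large instances leave 7 units idle and the small ones leave 1, because each scale-out step adds capacity in smaller increments.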

Autoscale can be configured using the Azure portal, PowerShell, Azure CLI, or the Azure Monitor REST API.

Get started with autoscaling

You can use autoscaling to dynamically scale your apps to meet changing demand or anticipate loads with different schedules, as well as set rules that trigger scaling actions. Regardless of how you set it up, the goal is to maximize your application’s performance while saving money by not wasting server resources.
